More and more people who build websites use a robots.txt file at the root level to give guidance to web crawlers.

Recently, people have started using a similar mechanism to provide information about the site itself. Because that information is meant for real humans rather than robots, the fun approach is to use a humans.txt file in the same location as robots.txt.

Quick reminder about robots.txt

robots.txt was invented in 1994 and became a standard for politely suggesting to web crawlers where to go within the site and where not to go.

Of course, this is only a suggestion, and it’s up to each crawler whether or not to obey the site’s crawling policy. A website can still identify malicious web crawlers and block their access. But most major search engines, such as DuckDuckGo, Baidu, Bing, Google, Yahoo! or Yandex, do follow the robots.txt policy.

A basic robots.txt that restricts access to some areas of the site looks like this:

User-agent: *

Disallow: /cgi-bin/

Disallow: /tmp/

Disallow: /junk/

Disallow: /directory/file.html
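To make the rules above more concrete, here is a minimal sketch of how a well-behaved crawler could interpret them, using Python’s standard `urllib.robotparser` module. The crawler name `MyCrawler` and the `example.com` URLs are placeholders chosen for illustration, and the rules are loaded from a string rather than fetched from a real site.

```python
from urllib.robotparser import RobotFileParser

# The rules from the example above, one directive per line.
RULES = """\
User-agent: *
Disallow: /cgi-bin/
Disallow: /tmp/
Disallow: /junk/
Disallow: /directory/file.html
"""


def build_parser(rules):
    """Load robots.txt rules from a string instead of fetching them."""
    parser = RobotFileParser()
    parser.parse(rules.splitlines())
    return parser


parser = build_parser(RULES)

# "MyCrawler" and example.com are hypothetical names used for illustration.
for url in (
    "https://example.com/index.html",
    "https://example.com/tmp/cache.txt",
    "https://example.com/directory/file.html",
    "https://example.com/directory/other.html",
):
    verdict = "allowed" if parser.can_fetch("MyCrawler", url) else "disallowed"
    print(url, "->", verdict)
```

Nothing in this check is enforced by the server: the crawler itself decides to call `can_fetch` before requesting a page, which is exactly why robots.txt is only a suggestion.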

If you don’t provide a robots.txt file, it’s equivalent to the following:

User-agent: *

Disallow:
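As a quick check of that equivalence, the sketch below feeds the two-line policy to Python’s standard `urllib.robotparser` and asks whether an arbitrary page may be fetched. The crawler name and URL are placeholders, not real ones.

```python
from urllib.robotparser import RobotFileParser

open_policy = RobotFileParser()
# An empty Disallow value means that no path is off-limits.
open_policy.parse(["User-agent: *", "Disallow:"])

# "MyCrawler" and example.com are hypothetical names used for illustration.
allowed = open_policy.can_fetch("MyCrawler", "https://example.com/any/path.html")
print("allowed" if allowed else "disallowed")
```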

It’s interesting to see that Google’s robots.txt has a lot of disallowed areas while Apple’s robots.txt does not! Could it be that the areas you declare off-limits to crawlers are exactly the areas malicious web crawlers want to visit? Listing them is a good way for such crawlers to discover them.

From robots.txt to humans.txt

When building a website, you can use meta tags with a name attribute to provide information about the site (such as its author) inside the head element:

<head>

<meta name="description" content="talking about humans.txt">

<meta name="keywords" content="humans.txt, robots.txt">

<meta name="author" content="Olivier HO-A-CHUCK">

</head>
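For illustration, here is a small sketch of how a tool could read those name/content pairs back out of a page, using Python’s standard `html.parser` module. The `MetaCollector` class is a hypothetical helper written for this example, not part of any library.

```python
from html.parser import HTMLParser


class MetaCollector(HTMLParser):
    """Collect <meta name="..." content="..."> pairs from an HTML page."""

    def __init__(self):
        super().__init__()
        self.meta = {}

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = dict(attrs)
        name = attributes.get("name")
        content = attributes.get("content")
        # Skip meta tags without a name, such as <meta charset="utf-8">.
        if name and content is not None:
            self.meta[name.lower()] = content


PAGE = """\
<head>
<meta name="description" content="talking about humans.txt">
<meta name="keywords" content="humans.txt, robots.txt">
<meta name="author" content="Olivier HO-A-CHUCK">
</head>
"""

collector = MetaCollector()
collector.feed(PAGE)
for name, content in collector.meta.items():
    print(f"{name}: {content}")
```

Because the collector keeps one value per name, it also shows the limit of this approach: a single author tag is not a comfortable place for a long list of people.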

But sometimes the list of contributors is long, and you might want to credit everybody and also provide more information about the site.

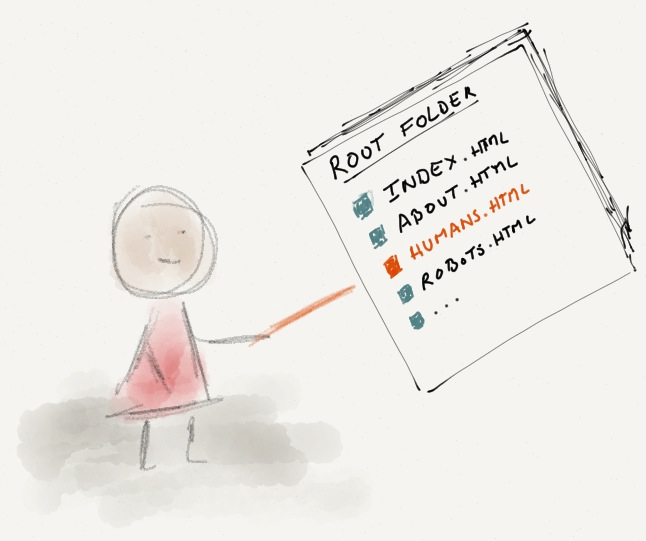

Someone had the ingenious idea of proposing, by analogy with robots.txt, a humans.txt file placed at the same root location of the website.

This file is a simple way to provide technical information about the site, such as who is developing it, which technical solutions are used, and so on.

In fact, it’s a simple way to pass human-to-human information, just as robots.txt passes human-to-robot information.

humans.txt suggested format

There is a generic suggested format given by humanstxt.org:

/* TEAM */

Your title: Your name.

Site: email, link to a contact form, etc.

Twitter: your Twitter username.

Location: City, Country.

[...]

/* THANKS */

Name: name or url

[...]

/* SITE */

Last update: YYYY/MM/DD

Standards: HTML5, CSS3,..

Components: Modernizr, jQuery, etc.

Software: Software used for the development
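Although humans.txt is meant to be read by people, the suggested layout is regular enough to be parsed. The following is only a sketch, assuming section headers of the form `/* NAME */` and entries of the form `Key: value`; the `parse_humans_txt` function and the sample content (including the name Jane Doe) are made up for illustration.

```python
import re

# Matches section headers such as "/* TEAM */".
SECTION = re.compile(r"^/\*\s*(.+?)\s*\*/$")


def parse_humans_txt(text):
    """Group 'Key: value' lines under their /* SECTION */ header."""
    sections = {}
    current = None
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if not line:
            continue
        header = SECTION.match(line)
        if header:
            current = header.group(1)
            sections.setdefault(current, [])
        elif current is not None and ":" in line:
            # Split on the first colon only, so URLs in values stay intact.
            key, value = line.split(":", 1)
            sections[current].append((key.strip(), value.strip()))
    return sections


# Hypothetical sample content following the suggested layout.
SAMPLE = """\
/* TEAM */
Developer: Jane Doe
Site: https://example.com/contact
Location: Paris, France

/* SITE */
Last update: 2024/01/31
Standards: HTML5, CSS3
"""

parsed = parse_humans_txt(SAMPLE)
for section, entries in parsed.items():
    print(section, entries)
```

Entries are kept as a list of pairs rather than a dictionary because the same key, such as a job title, can appear once per team member. Files that use their own free-form layout would simply yield few or no entries.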

Some sites use this format, such as GitHub, html5boilerplate, Twitter, Mozilla.org or Gizmodo. Humanstxt.org lists some others.

Meanwhile, you can find some fancy ones out there that use their own format. Most of the time, they are used to link to job offers. Indeed, the kind of person who would look for humans.txt might be the kind of person you want to recruit… :)

A few examples:

Chrome and Firefox extensions

If you want to check from time to time which sites use a humans.txt file, some browsers have extensions that automatically detect the presence of such a file. There are, for instance, a Chrome extension and a Firefox extension.
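How those extensions work internally is not described here, but the basic idea can be sketched in a few lines of Python: derive the root-level humans.txt address from whatever page you are on, then ask the server whether it exists. The function names `humans_txt_url` and `has_humans_txt` and the `example.com` address are assumptions made for this example.

```python
from urllib.parse import urlsplit, urlunsplit
from urllib.request import Request, urlopen


def humans_txt_url(page_url):
    """Return the root-level humans.txt URL for the site serving page_url."""
    parts = urlsplit(page_url)
    return urlunsplit((parts.scheme, parts.netloc, "/humans.txt", "", ""))


def has_humans_txt(page_url, timeout=5.0):
    """Best-effort check: True if the site answers 200 for /humans.txt.

    Limitation: a site that redirects unknown paths to its home page
    will also answer 200, so this can report false positives.
    """
    request = Request(humans_txt_url(page_url), method="HEAD")
    try:
        with urlopen(request, timeout=timeout) as response:
            return response.status == 200
    except OSError:
        # Covers HTTP errors (such as 404), DNS failures and timeouts.
        return False


# example.com is a placeholder; no network request is made here.
print(humans_txt_url("https://example.com/blog/post?id=1#top"))
```

Calling `has_humans_txt("https://example.com/")` performs a real network request, so the result depends on the site and on your connection.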